The three specific automations that justified my Cursor subscription — with the exact prompts I used and the mistakes that wasted my first attempts.

Content mode: Tested

Two months ago I wrote about setting up Cursor as a non-developer freelancer. Since then, three scripts have survived into weekly use. Together they save me roughly two hours per week on tasks I used to do by hand: cleaning messy client CSV exports, renaming deliverable files into organized folders, and pulling public pricing data into comparison spreadsheets.

None of these required coding knowledge to build. Each one took under 30 minutes with Cursor’s AI assist. But each one also failed on the first attempt because I made the same prompting mistake — and fixing that mistake is the most useful thing I can share.

3 Scripts — Time Savings Summary

CSV Cleaner: 15 min → 10 sec per file, ~8 files/week

File Renamer: 20 min → 3 sec weekly sort, 90% auto-sorted

Pricing Scraper: 35 min → 20 min per comparison, ~2/month

Combined weekly savings: ~2 hours
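As a rough check on that combined figure, the arithmetic can be sketched from the three summary lines above. The only assumption added here is the average month length used to turn the scraper's monthly count into a weekly one.

```python
# All figures come from the summary lines above; values are in minutes.
WEEKS_PER_MONTH = 52 / 12  # assumption: average month length

csv_saved = 8 * (15 - 10 / 60)                    # ~8 files/week, 15 min -> 10 sec each
renamer_saved = 20 - 3 / 60                       # 20 min -> 3 sec, once a week
scraper_saved = 2 * (35 - 20) / WEEKS_PER_MONTH   # ~2 comparisons/month, 35 -> 20 min

total_minutes = csv_saved + renamer_saved + scraper_saved
print(f"{total_minutes:.0f} min/week, or about {total_minutes / 60:.1f} hours")
```

The result lands a little above two hours, with nearly all of it coming from the CSV cleaner.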

Script 1: The CSV cleaner that handles every client’s export format

The problem: I get CSV exports from four different clients — HubSpot, Salesforce, Google Sheets, and one client who apparently uses a custom CRM from 2014. Every export has different column names, date formats, and encoding quirks. Manually normalizing these for analysis used to take 15–20 minutes per file, and I process about eight files per week.

The Cursor prompt that worked:

“

Write a Python script that reads a CSV file, detects the delimiter automatically, standardizes column names to lowercase with underscores, converts all date columns to YYYY-MM-DD format, removes completely empty rows, and saves the output as a clean UTF-8 CSV with the suffix "-clean".

”

The script handles my four client formats without modification. I drop a CSV into the folder, run the script, and get a cleaned version in under two seconds. Time per file went from 15 minutes to about 10 seconds.
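For illustration, here is a minimal sketch of the kind of script a prompt like this could produce. It is not the exact code Cursor generated. The list of date formats, the rule that a column counts as a date column when its name contains "date", and the function names are all assumptions made for the example, and it sticks to Python's standard library.

```python
import csv
import io
import re
from datetime import datetime
from pathlib import Path

# Assumption: formats to try, in order. Ambiguous dates like 03/04/2025 are read month-first.
DATE_FORMATS = ["%Y-%m-%d", "%m/%d/%Y", "%d.%m.%Y", "%b %d, %Y"]


def normalize_name(name: str) -> str:
    """Lowercase a header and replace runs of non-alphanumerics with underscores."""
    return re.sub(r"[^a-z0-9]+", "_", name.strip().lower()).strip("_")


def normalize_date(value: str) -> str:
    """Return the value as YYYY-MM-DD if it matches a known format, else unchanged."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value.strip(), fmt).strftime("%Y-%m-%d")
        except ValueError:
            continue
    return value


def clean_csv(path: Path) -> Path:
    """Write a cleaned copy of the CSV next to the original and return its path."""
    # utf-8-sig drops a byte-order mark if present; undecodable bytes are replaced.
    text = path.read_text(encoding="utf-8-sig", errors="replace")
    dialect = csv.Sniffer().sniff(text[:4096], delimiters=",;\t|")
    rows = list(csv.reader(io.StringIO(text), dialect))
    header = [normalize_name(h) for h in rows[0]]
    # Assumption: a column is a date column if its name contains "date".
    date_cols = {i for i, h in enumerate(header) if "date" in h}

    out_path = path.with_name(f"{path.stem}-clean.csv")
    with out_path.open("w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(header)
        for row in rows[1:]:
            if not any(cell.strip() for cell in row):
                continue  # skip completely empty rows
            writer.writerow(
                normalize_date(c) if i in date_cols else c
                for i, c in enumerate(row)
            )
    return out_path
```

Calling `clean_csv(Path("export.csv"))` would write `export-clean.csv` alongside the original. Values that match none of the listed date formats pass through unchanged rather than crashing the run.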

The mistake I made first: my initial prompt was “clean up my CSV files.” Cursor generated a script that assumed a specific column structure. When I fed it a different client’s export, it crashed. The fix was being explicit about what cleaning means — automatic delimiter detection, lowercase columns, date normalization — instead of assuming Cursor would infer my needs.

Script 2: The file renamer that sorts deliverables by client and date

The problem: by Friday each week, my Downloads folder has 30–40 files — client feedback PDFs, reference images, revised drafts, invoices. I used to spend 20 minutes every Friday dragging files into Client Name/YYYY-MM/ folders. Miss a week and the backlog doubles.

The prompt that worked:

“

Write a Python script that scans a folder for files, identifies the client name from the filename or parent folder name using a lookup dict I’ll provide, moves each file to a target directory structure of ClientName/YYYY-MM/ based on the file’s modification date, and logs every move to a JSON file. Skip files that don’t match any client pattern.

”

I added a simple dictionary mapping filename patterns to client names (e.g., files starting with “acme” go to the “Acme Corp” folder). The script runs in about three seconds and correctly sorts 90% of files. The 10% it skips — files with ambiguous names — I sort manually, which takes two minutes instead of twenty.
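A script along these lines might look like the sketch below. It is an illustration rather than the generated code: the `CLIENT_PATTERNS` entries (beyond the "acme" example), the function names, and the choice never to overwrite an existing file are assumptions.

```python
import json
import shutil
from datetime import datetime
from pathlib import Path
from typing import Optional

# Hypothetical lookup: lowercase filename prefix -> client folder name.
CLIENT_PATTERNS = {"acme": "Acme Corp", "globex": "Globex"}


def match_client(path: Path) -> Optional[str]:
    """Return the client whose prefix starts the filename or the parent folder name."""
    for candidate in (path.name.lower(), path.parent.name.lower()):
        for prefix, client in CLIENT_PATTERNS.items():
            if candidate.startswith(prefix):
                return client
    return None


def sort_files(source: Path, target: Path, log_path: Path) -> list:
    """Move matched files into target/ClientName/YYYY-MM/ and log each move."""
    moves = []
    for path in sorted(source.iterdir()):
        if not path.is_file():
            continue
        client = match_client(path)
        if client is None:
            continue  # leave unmatched files for manual sorting
        # Folder month comes from the file's modification time (local time).
        month = datetime.fromtimestamp(path.stat().st_mtime).strftime("%Y-%m")
        dest_dir = target / client / month
        dest_dir.mkdir(parents=True, exist_ok=True)
        dest = dest_dir / path.name
        if dest.exists():
            continue  # assumption: never overwrite an existing file
        shutil.move(str(path), str(dest))
        moves.append({"from": str(path), "to": str(dest)})
    # This sketch overwrites the log on every run.
    log_path.write_text(json.dumps(moves, indent=2), encoding="utf-8")
    return moves
```

Onboarding a new client then means adding one entry to the dictionary, which keeps the matching logic simple enough to check by eye.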

The mistake I made first: I asked Cursor to “organize my files intelligently.” The AI tried to use NLP to detect client names from file content, which was absurdly over-engineered for my needs. A simple pattern-matching dictionary was all I needed. Lesson: tell Cursor the simplest approach that would work, not the smartest one.

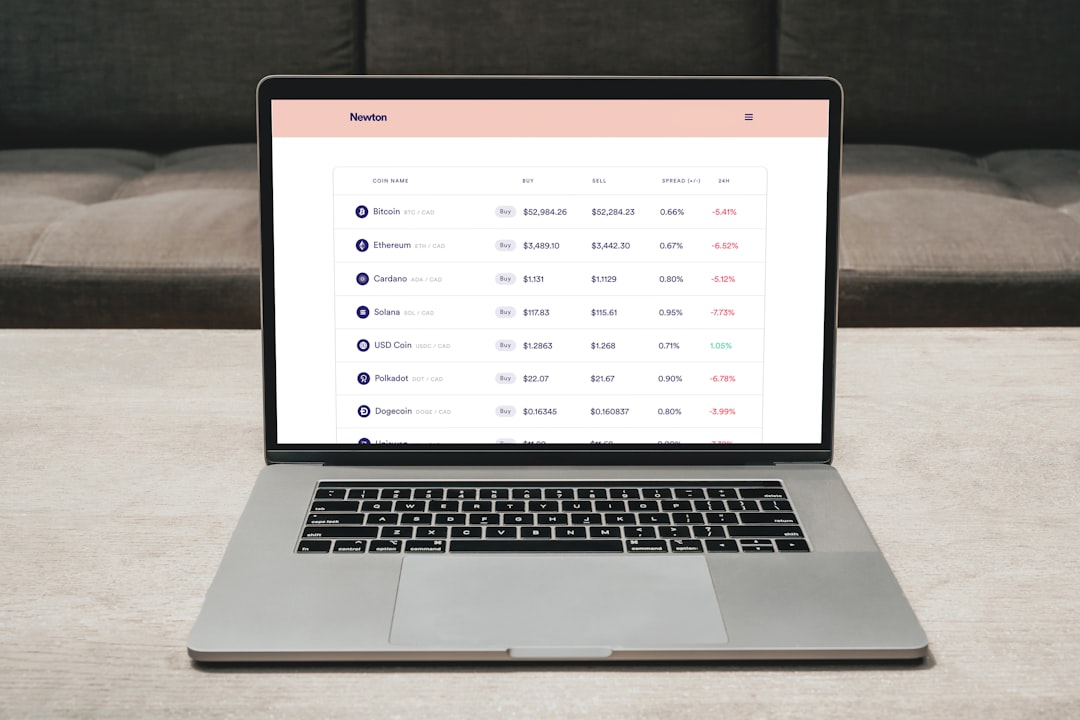

Script 3: The pricing scraper that feeds my comparison spreadsheets

The problem: for competitive analysis deliverables, I need current pricing from 5–10 SaaS tools. Manually visiting each pricing page, noting the tiers, and entering them into a spreadsheet takes 30–40 minutes per comparison. I do roughly two of these per month.

The prompt that worked:

“

Write a Python script that takes a list of URLs from a JSON file, fetches each page’s HTML, extracts text content from pricing-related sections (look for elements containing "pricing", "price", "plan", "month", "$"), and saves the extracted text to a timestamped JSON file with the URL as the key. Use requests with a 10-second timeout and skip URLs that return errors.

”

This one is the roughest of the three — it doesn’t parse pricing into structured data, it just extracts the relevant text sections. But that’s enough. I review the extracted text, pull the numbers into my spreadsheet, and verify against the actual page. The extraction step saves about 15 minutes per comparison because I’m reading pre-filtered text instead of navigating through marketing pages.
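The extract-then-review approach can be sketched roughly as follows. This is not the generated script: the prompt asked for `requests`, while this example swaps in the standard library's `urllib.request` and `html.parser` so it runs without installing anything. The class and function names and the output filename pattern are assumptions.

```python
import json
from datetime import datetime
from html.parser import HTMLParser
from pathlib import Path
from urllib.request import urlopen

KEYWORDS = ("pricing", "price", "plan", "month", "$")


class TextCollector(HTMLParser):
    """Collect visible text chunks, ignoring <script> and <style> contents."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = " ".join(data.split())  # collapse whitespace
        if text and not self._skip_depth:
            self.chunks.append(text)


def extract_pricing_text(html: str) -> list:
    """Return the text chunks that mention any pricing-related keyword."""
    parser = TextCollector()
    parser.feed(html)
    return [c for c in parser.chunks if any(k in c.lower() for k in KEYWORDS)]


def scrape(urls_file: Path, out_dir: Path) -> Path:
    """Fetch each URL listed in the JSON file and save matching text keyed by URL."""
    urls = json.loads(urls_file.read_text(encoding="utf-8"))
    results = {}
    for url in urls:
        try:
            with urlopen(url, timeout=10) as resp:
                html = resp.read().decode("utf-8", errors="replace")
        except (OSError, ValueError):
            continue  # skip URLs that fail, time out, or are malformed
        results[url] = extract_pricing_text(html)
    out_path = out_dir / f"pricing-{datetime.now():%Y%m%d-%H%M%S}.json"
    out_path.write_text(json.dumps(results, indent=2), encoding="utf-8")
    return out_path
```

Keyword filtering like this will still pull in some marketing copy and will miss prices that a page loads with JavaScript, which is one more reason to verify the numbers against the live page.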

The mistake I made first: I asked for a script that would “extract and compare pricing automatically.” Cursor generated something that tried to parse dollar amounts, tier names, and feature lists into a structured table. It worked on two out of ten sites and hallucinated data on the rest. The simpler approach — extract raw text and let me do the interpretation — is less impressive but actually reliable.

The prompting pattern that fixed all three scripts

The common mistake across all three first attempts: I described the outcome I wanted instead of the mechanism I needed. “Clean up my CSVs” vs. “detect delimiter, lowercase columns, normalize dates.” “Organize my files” vs. “match filename patterns to a lookup dictionary.” “Compare pricing” vs. “extract text from pricing-related HTML elements.”

Cursor’s AI is good at writing code for well-specified tasks. It’s mediocre at inferring what you actually need from vague descriptions. As a non-developer, my instinct was to describe the problem and let the AI figure out the solution. That instinct was wrong.

The pattern that works: describe the input (what the script receives), the transformation (the specific operations, in order), and the output (what the script produces and where it saves). Skip the “why”: Cursor doesn’t need context about your workflow; it needs technical specifications.
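One way to make the pattern a habit is to fill in a fixed template before opening Cursor. The helper below is a hypothetical sketch, and the wording of the template is an assumption. It simply assembles the three parts into one prompt, using the CSV cleaner as the example.

```python
def build_prompt(input_spec: str, steps: list, output_spec: str) -> str:
    """Assemble an input / transformation / output prompt."""
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return (
        "Write a Python script.\n"
        f"Input: {input_spec}\n"
        f"Steps, in order:\n{numbered}\n"
        f"Output: {output_spec}"
    )


prompt = build_prompt(
    input_spec="a CSV file with an unknown delimiter",
    steps=[
        "detect the delimiter automatically",
        "standardize column names to lowercase with underscores",
        "convert all date columns to YYYY-MM-DD",
        "remove completely empty rows",
    ],
    output_spec='a UTF-8 CSV saved with the suffix "-clean"',
)
print(prompt)
```

If a step is hard to write as a single concrete operation, that is usually a sign the request is still describing an outcome rather than a mechanism.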

What I’ve learned about Cursor’s limits for non-developers

After two months of weekly use, here’s where Cursor excels and where it struggles for someone without a coding background:

Works well: File manipulation, data formatting, text extraction, pattern matching. Anything where the input and output are clearly defined files. I’d estimate 80% of my automation needs fall into this category.

Works poorly: Anything requiring ongoing interaction — scripts that need user input during execution, tools that should run in the background, or automations that depend on third-party APIs with authentication. I’ve tried building a simple email parser and a Notion integration, both of which required troubleshooting I couldn’t do without understanding the error messages.

The maintenance question: Scripts break when the inputs change. My CSV cleaner needed one update when a client changed their export format. The file renamer needed a new pattern when I onboarded a new client. Each fix took about 10 minutes in Cursor. But I can see a future where maintaining ten scripts becomes its own time sink. For now, three scripts is manageable.

For me, Cursor Pro justifies its $20/month with exactly these three scripts. Two hours saved per week at my rate covers the subscription several times over. But the value is concentrated — remove any one of the three and the math gets tighter.
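The break-even point behind that claim can be worked out from the two figures above. The sketch below assumes an average month of 52/12 weeks. The `coverage` helper is hypothetical and takes whatever hourly rate applies to you.

```python
SUBSCRIPTION_PER_MONTH = 20.0  # Cursor Pro price quoted above, in USD
HOURS_SAVED_PER_WEEK = 2.0     # the estimate used throughout this article
WEEKS_PER_MONTH = 52 / 12      # assumption: average month length

hours_saved_per_month = HOURS_SAVED_PER_WEEK * WEEKS_PER_MONTH
break_even_rate = SUBSCRIPTION_PER_MONTH / hours_saved_per_month


def coverage(hourly_rate: float) -> float:
    """How many times over the saved time pays for the subscription."""
    return hourly_rate * hours_saved_per_month / SUBSCRIPTION_PER_MONTH


print(f"Hours saved per month: {hours_saved_per_month:.1f}")
print(f"Break-even hourly rate: ${break_even_rate:.2f}")
```

Any hourly rate above the break-even figure means the subscription pays for itself, and `coverage(rate)` shows by how much.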

The uncertainty is whether I’ll find the fourth and fifth scripts that keep the ROI growing, or whether I’ve already picked the easy wins and the remaining automation opportunities require more technical skill than Cursor can bridge. I’m giving it one more quarter to find out.

If you’re a non-developer freelancer considering Cursor, start with your most repetitive file-handling task. Write the prompt using the input-transformation-output pattern. Build one script, use it for two weeks, then decide if it’s worth continuing. Don’t try to automate everything at once — the first three wins will tell you whether Cursor fits your workflow.

FAQ

Do I need to know Python to use these scripts?

No. I don’t write Python — Cursor generates it. I describe what I want, Cursor writes the code, and I run it. When something breaks, I paste the error message back into Cursor and ask it to fix the issue. That said, a basic understanding of file paths and command-line execution helps. I spent about an hour learning those basics in my first week.

Can I build these same scripts with ChatGPT instead of Cursor?

Yes, but with more friction. ChatGPT generates code in the chat window that you then copy into a file, save, and run manually. Cursor lets you generate, edit, and run the code in one environment. For a single script, the difference is minor. For iterating on a script that doesn’t work on the first try, Cursor’s integrated workflow saves significant time.

How do I run these scripts on a schedule?

It depends on your operating system. On Mac, I use cron jobs, which Cursor helped me set up too. The file organizer runs every Friday at 5pm. The CSV cleaner runs whenever I save a file to a specific folder; cron itself only runs on a time schedule, so that kind of trigger needs either a folder watcher or a frequent scheduled check for new files. The pricing scraper I trigger manually because I only need it for specific projects.

What happens when a script breaks?

So far it hasn’t been a major issue. In two months, I’ve had three breakages: one from a client changing their CSV format, one from a website redesigning its pricing page, and one from a Python update. Each took 5–15 minutes to fix by pasting the error into Cursor and asking for a correction. If breakages become more frequent as I add scripts, I’ll reassess.

Sources

AI-assisted research and drafting. Reviewed and published by ToolMint. Last updated: 2026-04-25.