GPT-5.5 Just Dropped — Here’s What the Numbers Say Before I Test It Against Claude Code

Content mode: Informed — Field Report

I haven’t used this yet, but here’s what the data shows. OpenAI released GPT-5.5 yesterday (April 23, 2026), and as someone who already pays for both Claude Pro and ChatGPT Plus — about $40 a month to keep two AI writing tools open on my desktop — my first question wasn’t whether it’s “smarter.” It was: does this change which tool I leave open on which monitor?

I’m not a developer. I write client deliverables — proposals, brand strategy docs, research briefs — and I use Cursor for the small scripts that clean up data between calls. I’ve been watching Claude Code from the outside the way most solo operators do: curious about where the coding-agent frontier lands, because when it lands well, it eventually trickles down to the tools non-devs like me actually use.

So — what’s actually new in GPT-5.5, and where might it pressure Claude Code?

What OpenAI actually shipped

The release lands with concrete benchmark numbers, which is the first honest thing I’ll say about it: this isn’t a vibes announcement.

- Terminal-Bench 2.0: 82.7% — the “operate the computer” benchmark, not just write code

- SWE-Bench Pro: 58.6% — real software engineering tasks, the harder cousin of SWE-Bench

- Codex context window: 400K tokens inside the coding environment; 1M tokens via the API

- Token efficiency: OpenAI says GPT-5.5 “uses fewer tokens in Codex tasks” than GPT-5.4 at comparable quality

- Latency: roughly matches GPT-5.4 per-token despite the capability gains

Pricing for API access:

| Model | Input (per 1M tokens) | Output (per 1M tokens) |
|---|---|---|
| gpt-5.5 | $5 | $30 |
| gpt-5.5-pro | $30 | $180 |

Fast mode runs about 1.5x faster at 2.5x the cost. GPT-5.5 is rolling out to Plus, Pro, Business, and Enterprise users in ChatGPT and Codex; GPT-5.5 Pro is limited to Pro, Business, and Enterprise. API access is promised "very soon."
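To make the table concrete, here's a small Python sketch that turns token counts into dollars. The prices and the 2.5x fast-mode multiplier come from the announcement. The helper name, the example task size, and the assumption that fast mode scales input and output prices equally are mine, so treat the result as a back-of-envelope estimate, not a bill.

```python
# Hypothetical helper: estimate the API cost of one agentic task from the
# list prices quoted in OpenAI's announcement (USD per 1M tokens).
PRICES = {
    "gpt-5.5": (5.00, 30.00),
    "gpt-5.5-pro": (30.00, 180.00),
}
FAST_MODE_MULTIPLIER = 2.5  # "about 1.5x faster at 2.5x the cost"


def task_cost(model: str, input_tokens: int, output_tokens: int, fast: bool = False) -> float:
    """Return the estimated USD cost of a single request or task."""
    input_price, output_price = PRICES[model]
    cost = (input_tokens * input_price + output_tokens * output_price) / 1_000_000
    if fast:
        # Assumption: fast mode multiplies input and output prices equally.
        cost *= FAST_MODE_MULTIPLIER
    return round(cost, 4)


if __name__ == "__main__":
    # Hypothetical task: the agent reads 200K tokens of context and writes 20K.
    for name in PRICES:
        standard = task_cost(name, 200_000, 20_000)
        fast = task_cost(name, 200_000, 20_000, fast=True)
        print(f"{name}: ${standard:.2f} standard, ${fast:.2f} fast mode")
```

For that hypothetical 200K-in, 20K-out task, the sketch gives $1.60 on gpt-5.5 and $9.60 on gpt-5.5-pro before any fast-mode markup.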

The framing from OpenAI is explicit: this is a bet on agentic work. Greg Brockman called it “a real step forward towards the kind of computing we expect in the future.” Per TechCrunch’s reporting, OpenAI is openly talking about bundling ChatGPT, Codex, and an AI browser into a single “super app” for enterprise customers.

Why the Claude Code comparison matters now

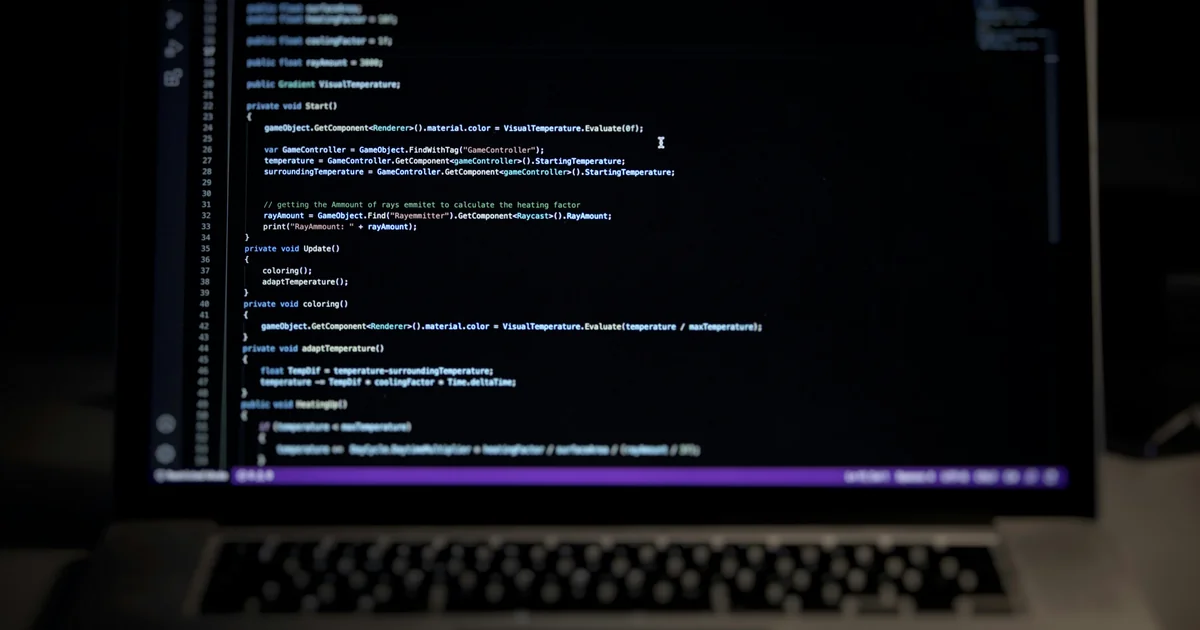

Claude Code, as it stands while I'm writing this, is Anthropic's agentic coding CLI, running on Claude models such as Opus. It's what developers reach for when they want an AI to actually do work in the terminal: run commands, edit files, chain steps, return a diff. It's had roughly a year to earn trust among people who care about that loop behaving predictably.

GPT-5.5 in Codex is now the direct rival to that workflow, not just ChatGPT-the-chatbox. That’s the shift.

Two things jump out before I’ve run a single side-by-side:

First, 82.7% on Terminal-Bench 2.0 is the real claim. A coding chatbot can ace SWE-Bench in a controlled loop. Getting 82.7% on “use the computer like a person” is a different problem — navigating folders, reading error messages, recovering from the model’s own bad decisions. If that number holds up in the wild, it’s the first time I’d credibly hand a messy local task to an OpenAI agent and not feel like I was gambling.

Second, 400K Codex context is enough for real work. The friction I’ve watched developer friends hit with agentic coding tools is rarely “the model is dumb.” It’s “I had to re-paste context twice because the window filled up.” 400K is generous enough that a mid-sized project fits without stunting the loop.
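A quick way to sanity-check whether a project fits in that 400K window is to count characters and divide by four, a common rule of thumb for English text. This is a rough sketch, not a real tokenizer: the 4-characters-per-token ratio, the list of file types, and the 50% headroom (left for the agent's own reasoning and output) are all assumptions.

```python
from pathlib import Path

CHARS_PER_TOKEN = 4       # rough rule of thumb; real tokenizers vary by language and code style
CODEX_CONTEXT = 400_000   # Codex window quoted in the announcement
TEXT_SUFFIXES = {".py", ".js", ".ts", ".md", ".txt", ".json", ".csv", ".html", ".css"}


def estimate_tokens(root: str) -> int:
    """Roughly estimate how many tokens the text files under `root` would use."""
    total_chars = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix.lower() in TEXT_SUFFIXES:
            total_chars += len(path.read_text(encoding="utf-8", errors="ignore"))
    return total_chars // CHARS_PER_TOKEN


def fits_in_context(root: str, window: int = CODEX_CONTEXT, headroom: float = 0.5) -> bool:
    """True if the project uses at most `headroom` of the window, leaving room for the agent's work."""
    return estimate_tokens(root) <= window * headroom
```

The headroom parameter is the design choice that matters: an agent that loads the whole project and has no room left to think is the "re-paste context twice" problem in a different form.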

What I'd want to know before reading further into the hype: what does GPT-5.5's agentic loop do when it isn't sure? Does it stop and ask, or push ahead? Claude Code's tuning leans cautious; it stops and asks when a plan could destroy your repo. OpenAI's "super app" framing worries me slightly in the opposite direction. A confident, wrong agent is worse than a cautious, slow one.

Where I predict GPT-5.5 will pressure Claude Code

I'm trying to be specific here instead of making a vibes call. Three places I expect GPT-5.5 to put heat on Claude Code in the next few months:

- One-off computer tasks. “Open this folder, rename 300 files based on a CSV, verify the output.” The Terminal-Bench 2.0 score suggests GPT-5.5 is built for exactly this. For me, that’s the weekly client-deliverable packaging job that currently eats 40 minutes in Cursor.

- Research workflows that blend code and writing. OpenAI is explicitly pitching scientific and technical research. “Write a Python script to scrape data, then draft the summary memo” is a natural fit when both halves live in one model. Claude Code is excellent at the code half; Claude.ai is excellent at the writing half; having both in one workflow has always required switching windows.

- Solo operators who already live in ChatGPT. If you pay for Plus and use Claude only occasionally, GPT-5.5 inside Codex removes a reason to keep two subscriptions. The gravitational pull toward consolidation is real when your monthly AI spend is already past $100.
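For reference, here's roughly what the rename-by-CSV job looks like as a plain Python script, the kind of thing I'd otherwise put together in Cursor. It's a hypothetical sketch: the `old_name`/`new_name` column names, the function names, and the refuse-on-collision rule are my assumptions, not anything from OpenAI or Anthropic. The dry-run default mirrors the "stop and check before touching files" behavior I want from any agent.

```python
import csv
from pathlib import Path


def rename_from_csv(folder: str, mapping_csv: str, dry_run: bool = True) -> list[tuple[str, str]]:
    """Rename files in `folder` using a CSV with `old_name,new_name` columns.

    Always validates the plan; only renames when dry_run is False.
    Returns the (old, new) pairs in CSV order.
    """
    base = Path(folder)
    with open(mapping_csv, newline="", encoding="utf-8") as f:
        pairs = [(row["old_name"], row["new_name"]) for row in csv.DictReader(f)]

    sources = [old for old, _ in pairs]
    targets = [new for _, new in pairs]

    # Refuse to proceed if any source file is missing.
    missing = [old for old in sources if not (base / old).exists()]
    if missing:
        raise FileNotFoundError(f"missing source files: {missing}")
    # Refuse if two rows would produce the same target name.
    if len(set(targets)) != len(targets):
        raise ValueError("duplicate target names in CSV")
    # Refuse if a target already exists, so nothing gets silently overwritten.
    taken = [new for new in targets if (base / new).exists()]
    if taken:
        raise FileExistsError(f"target names already exist: {taken}")

    if not dry_run:
        for old, new in pairs:
            (base / old).rename(base / new)
    return pairs


def verify(folder: str, pairs: list[tuple[str, str]]) -> bool:
    """Check that every target exists and every source name is gone."""
    base = Path(folder)
    return all((base / new).exists() and not (base / old).exists() for old, new in pairs)
```

Run it once with `dry_run=True` to see the plan, again with `dry_run=False`, then finish with `verify()`: the same three steps I'd expect an agent to take on its own.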

Where I predict Claude Code keeps the edge

- Long-form writing judgment. Speculation, but Claude’s emphasis on writing quality has held across four or five model generations. GPT-5.5 has to prove it’s closed that gap on long deliverables, not just matched benchmark scores.

- Cautious refusal behavior. When I don’t want an agent to take a wild swing at my files, Claude still feels like the safer default.

- The next Anthropic move. GPT-5.5 Pro at $30/$180 per million tokens is aggressive pricing for a frontier model. The real question is what Anthropic ships next — and whether yesterday’s announcement pressures them to move the Claude Code story forward faster.

What I’m actually going to do this week

I’m not switching defaults on client work. The cost of getting a deliverable wrong is higher than the cost of running two subscriptions for another month.

What I will do:

- Run the same 4,000-word brand strategy draft prompt through GPT-5.5 and my current Claude workflow. Compare coherence around sections 4-5, where ChatGPT has historically lost the thread for me.

- Hand GPT-5.5 inside Codex a real file-rename-by-CSV task I’d normally do in Cursor. Measure wall-clock time and whether I had to correct anything.

- Wait two weeks. First-week benchmarks always lead; first-month behavior is what actually matters.

I’ll write that up with real numbers when I have them. Until then, treat this as what it is: a public-sources Field Report on a model I haven’t run.

FAQ

Is GPT-5.5 available in the ChatGPT Plus tier I already pay for?

Yes. OpenAI confirmed rollout to Plus, Pro, Business, and Enterprise users in ChatGPT and Codex. GPT-5.5 Pro (the higher-tier version) is Pro, Business, and Enterprise only.

Does GPT-5.5 replace Claude Code for agentic coding?

Not yet, and probably not for everyone. Claude Code's strength isn't raw benchmark scores; it's the loop's tuning and a year of field use. GPT-5.5's 82.7% on Terminal-Bench 2.0 is the first time OpenAI has credibly entered that space head-on, but "best on paper" and "best to live with" aren't the same thing.

How does GPT-5.5 pricing compare for a solo operator?

At $5 input / $30 output per million tokens, standard GPT-5.5 isn’t the cheapest frontier model on the market, but it isn’t the most expensive either. Unless you’re burning through millions of tokens a week, the subscription tier matters more than API pricing — and for most freelancers, that means ChatGPT Plus at $20/month.
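Here's the arithmetic behind that as a short Python sketch: how many tokens per month $20 of GPT-5.5 API usage buys at the list prices above. The function name and the 75/25 input-to-output split in the example are assumptions; your own mix will differ.

```python
SUBSCRIPTION_USD = 20.00                  # ChatGPT Plus, per month
INPUT_PRICE, OUTPUT_PRICE = 5.00, 30.00   # gpt-5.5 list prices, USD per 1M tokens


def breakeven_tokens(input_share: float) -> int:
    """Tokens per month that $20 of API usage buys, given the fraction of tokens that are input."""
    if not 0.0 <= input_share <= 1.0:
        raise ValueError("input_share must be between 0 and 1")
    # Weighted price per 1M tokens for this input/output mix.
    blended = input_share * INPUT_PRICE + (1 - input_share) * OUTPUT_PRICE
    return int(SUBSCRIPTION_USD / blended * 1_000_000)


if __name__ == "__main__":
    for share in (1.0, 0.75, 0.5, 0.0):
        print(f"{share:.0%} input: {breakeven_tokens(share):,} tokens per month")
```

At a 75/25 split, $20 buys roughly 1.78 million tokens a month; past that volume, pay-as-you-go costs more than the flat Plus fee.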

Should I cancel Claude Pro?

Not based on benchmarks alone. I’m keeping both for at least another billing cycle. If you already use ChatGPT more, this release gives you cover to consolidate. If you use Claude primarily for writing, give it a month before moving anything.

Sources

- OpenAI releases GPT-5.5, bringing company one step closer to an AI ‘super app’ — TechCrunch

- Introducing GPT-5.5 — OpenAI

- OpenAI announces GPT-5.5, its latest artificial intelligence model — CNBC

- OpenAI Releases GPT-5.5: Stronger Agentic Coding, Knowledge Work, and Research — Knightli

AI-assisted research and drafting. Reviewed and published by ToolMint.